You can find out more about Woebot here.

You can find out more about Biobeat here.

You can find out more about “How We Feel” here.


It is clear that the ever-increasing demand for mental health support often outstrips our current resources. The endless waiting lists for therapy are a clear problem for all ages. Can technology, specifically artificial intelligence (AI), hold the key to addressing the mental health crisis? Can we entrust robots and wearable devices with the task of not only providing support but also revolutionising our approach to understanding and managing our emotions? This Curious Life explores AI’s potential in mental healthcare and asks: can AI offer answers on how to enhance our emotional well-being and overall mental health at every stage of our lives?

Photography by Alex Shuper on Unsplash

Here are a few intriguing projects that could spark your curiosity; they have certainly caught our attention:

AI therapists.

In a world marked by a shortage of therapists and counsellors, limited appointments, and long waitlists, the challenges in delivering mental health care are substantial and are holding back progress towards health equity. Could talking to a robot rather than a human be a solution? Meet Woebot, an AI-powered chatbot rooted in Cognitive Behavioral Therapy (CBT) principles. Woebot is designed to help users manage distressing thoughts and emotions. Although it isn’t intended for emergencies or acute situations, it could be a useful support when you need ‘someone’ to ease your mind. Founder Alison Darcy makes a good case with the example of 2 am, when nobody else is around and our darkest moments often hit: Woebot is there to support you at those times.

Photography by Joshua Chehov

Wearable support.

AI-driven mental health solutions also come in the form of wearable devices. These devices include sensors that pick up physiological signals, which are then interpreted to offer proactive support. Take Biobeat, for example. It gathers data on sleep patterns, physical activity, and changes in heart rate and rhythm to monitor the user’s emotional state and cognitive well-being. This data is then compared with combined and anonymised information from other users to create early warning signals, indicating when some form of help or support might be necessary. Users can then respond to this information by making behavioural changes themselves or seeking assistance from healthcare services where necessary.

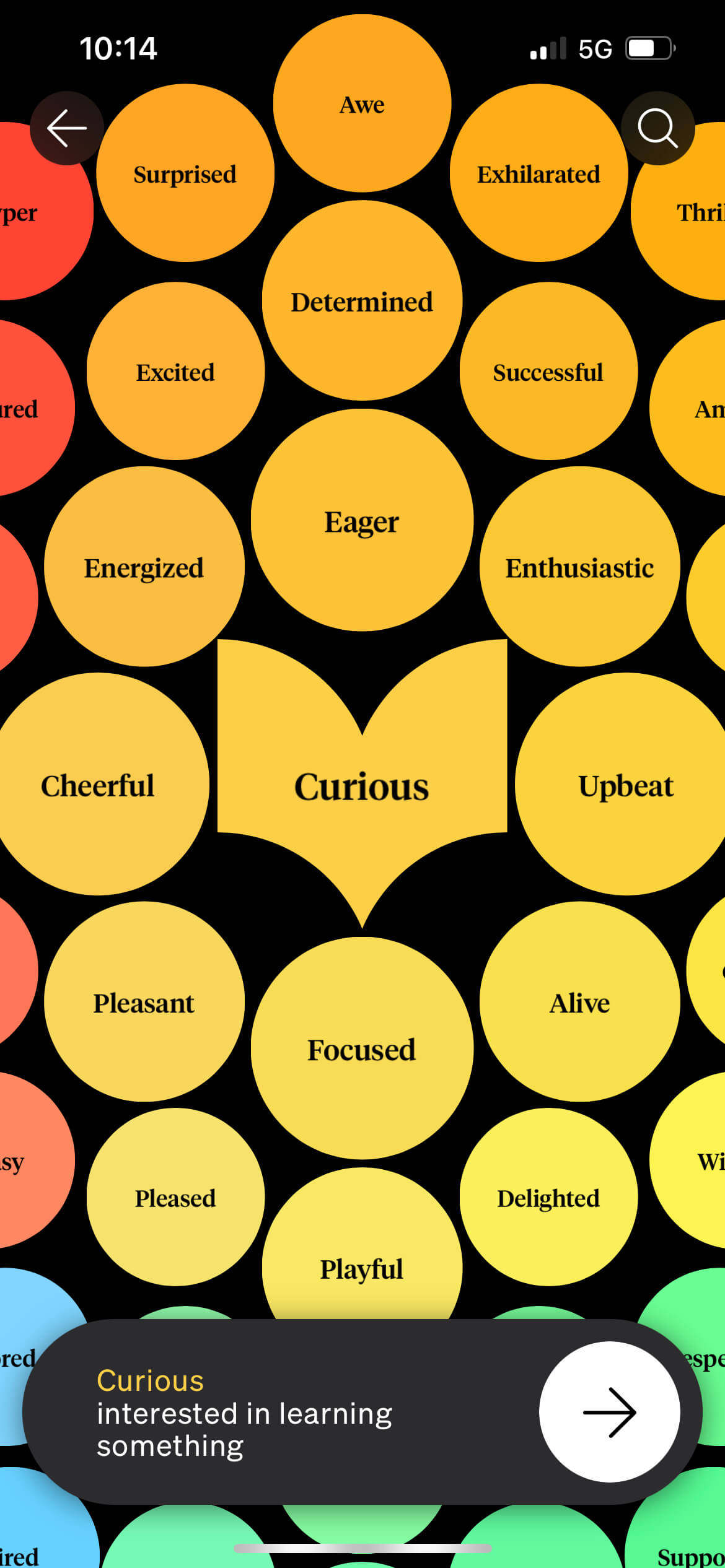

How We Feel app.

Technology for emotional understanding.

Innovation in understanding emotions is driving the development of technology-enabled tools for building emotional strength, like the ‘How We Feel’ app. Highlighting the potential of emotions in personal growth and good societal mental health, Marc Brackett, the founding director of the Yale Center for Emotional Intelligence, has a vision in which each of us becomes an “emotional scientist.” The How We Feel Project is a nonprofit organisation created by scientists, designers, engineers, and therapists to help everyone better understand their own emotions. The app is designed to help us spot patterns as they appear over time and learn new techniques for dealing with different emotions in the moment.

AI for personalised plans.

Creating custom treatment plans is becoming possible with the help of AI, which uses patient data to tailor a plan to each individual. Machine learning algorithms analyse a wide range of personal information, from genetics and medical history to lifestyle, to work out the most suitable treatments. By examining this data, AI can propose personalised interventions, potentially making treatment strategies more effective.

Diagnosis and early intervention.

AI is also showing promise in identifying mental health issues at an early stage. Through the analysis of a range of data sources, AI has shown good accuracy in predicting conditions such as suicidal thoughts, depression, and schizophrenia. This is exciting because timely interventions can help halt the progression of these conditions, preventing them from becoming more severe.

Photography by Markus Spiske

There are, however, some concerns, especially around AI bias and data quality. These concerns are crucial because they can result in undependable predictions and may even perpetuate social biases such as ageism, which is already a concern in current mental health diagnoses (you can read a previous This Curious Life story on ageism in mental health here).

How can we ensure that AI is used as a force for good in mental healthcare, benefiting patients while avoiding potential pitfalls? It's a crucial question that we need to tackle.

The way forward should involve encouraging collaboration between AI researchers and healthcare professionals to create AI models that are both fair and accurate. In the field of mental health diagnosis, which often relies on subjective judgments based on patients’ self-reported experiences, careful monitoring and follow-up procedures are essential. By working together, AI researchers and healthcare professionals may be able to unlock the full potential of AI while minimising potential risks.

AI holds significant promise in enhancing mental healthcare through improved diagnosis, personalised treatments, and patient support. However, it also brings challenges related to bias, subjectivity, and ethical considerations that demand close attention in both its development and implementation.
